From the most basic software to the most groundbreaking innovations, technology plays a major role in our everyday lives. Every website or piece of software we come across was built by a developer, but what is web development, and what does a web developer do?

To the untrained eye, it can appear to be a difficult, perplexing, and inaccessible area. So, in order to shed some light on this fascinating industry, we’ve compiled the ultimate guide to web design & development and what it takes to become a full-fledged web developer.

We’ll go through the fundamentals of web development in depth in this guide, as well as the skills and resources you’ll need to break into the field. If you decide that web development is for you, the next move is to learn the necessary skills.

But first, we’ll look at the state of the web development industry in 2023 and decide whether it’s a wise career move.

Is It a Good Time to Start a Career as a Web Developer?

It’s crucial to weigh your options before starting a new career. Can your new field offer you plenty of opportunities and stability? What are your chances of being employed after completing your chosen programme or bootcamp?

These questions are more important than ever in the aftermath of 2020. The COVID-19 pandemic has had a significant effect on the economy and the employment market, with hiring declining in many sectors. With that in mind, let’s take a look at the current state of the web development industry.

Are Web Developers in demand right now?

You’ve probably noticed that technology is omnipresent in our lives, regardless of what’s going on in the world.

Whether it’s scrolling through our favourite social media apps, checking the news, paying for something online, or working with colleagues through collaboration software and tools, technology plays a role in almost everything we do. Behind this technology are teams of developers who not only build it but also keep it running smoothly.

In today’s technology-driven world, those who can create and manage websites, applications, and software play a critical role, which is reflected in the web development job market. Web developer employment is expected to rise by 8% between 2019 and 2029, much faster than the average for all occupations, according to the Bureau of Labor Statistics.

But, after the unpredictable twists and turns of 2020, does this still hold true? In a nutshell, yes; web developers seem to have ridden out the storm relatively unscathed.

Full-stack developer was ranked second on Indeed’s list of the best jobs for 2020, and this trend is expected to continue. Web development, cloud computing, DevOps, and problem-solving are among the most in-demand tech skills in 2023, according to a Google search.

Employers will continue to be drawn to full-stack development in particular.

Sergio Granada writes for TechCrunch on how full-stack developers were critical to business during the COVID-19 crisis: “As businesses in all sectors move their business to the virtual world in response to the coronavirus pandemic, the ability to do full-stack creation will make engineers highly marketable.

Those who can use full-stack approaches to rapidly create and deliver software projects have the best chance of being at the top of a company’s or client’s wish list.”

If you want to gauge the market for web developers, search for “application developer” or “full-stack developer” positions in your area on sites like Indeed, Glassdoor, and LinkedIn. We ran a quick search for web development jobs in the United States and found over 26,000 openings as of this writing.

As you can see, web developers are still in high demand, perhaps even more so as a result of the coronavirus pandemic. So how exactly has COVID-19 influenced the web development industry? Let’s take a closer look.

How has COVID-19 affected the Industry?

Despite the fact that many companies have suffered (and continue to struggle) as a result of the coronavirus pandemic, the technology industry has done relatively well.

Many businesses rely on digital technologies to work remotely, which underlines the value of technology and of the people who create it. As a result, many are predicting a boom in the industry: Market Data Forecast predicts that the tech industry will grow from $131 billion in 2020 to $295 billion in 2025.

COVID-19 has, of course, brought some changes for current and aspiring web developers. First and foremost, there is the rise of remote work. When preparing for your first job in the industry, be ready to work remotely at least part-time, if not full-time.

Fortunately, web development is a job that can be done from anywhere. This guide will teach you all about what it’s like to work as a remote developer.

We also expect web developer employment to rise in certain markets as a direct result of the goods and services most in demand right now. Healthcare, media and entertainment, online banking, distance education, and e-commerce, for example, will continue to expand to reflect consumer needs and habits in a more socially distanced world.

In comparison to other industries, COVID-19 has had a minor influence on the software industry and web developers. Despite the fact that the situation is still developing, new and aspiring web developers should rest assured that they are embarking on a career that is future-proof.

Should You become a Web Developer in 2023?

So, what’s the final word? Is it a good time to start a career as a web developer?

Based on the job market and projected employment growth, we believe the answer is clear: it’s a great time to start a career as a web developer! Technology is more important than ever in how we work, communicate with loved ones, access healthcare, shop, and so on.

We say go for it if you want to be a part of this exciting industry and develop the technology of the future.

But first, let’s go over the fundamentals. What is web development, and what exactly does a web developer do? Continue reading to learn more.

What is web development?

Web development is the process of building websites and applications for the internet or for a private network known as an intranet. Web development isn’t concerned with how a website looks; rather, it’s about the coding and programming that make it work.

All of the tools we use on a daily basis via the internet have been developed by web developers, from the most basic, static web pages to social media platforms and apps, from ecommerce websites to content management systems (CMS).

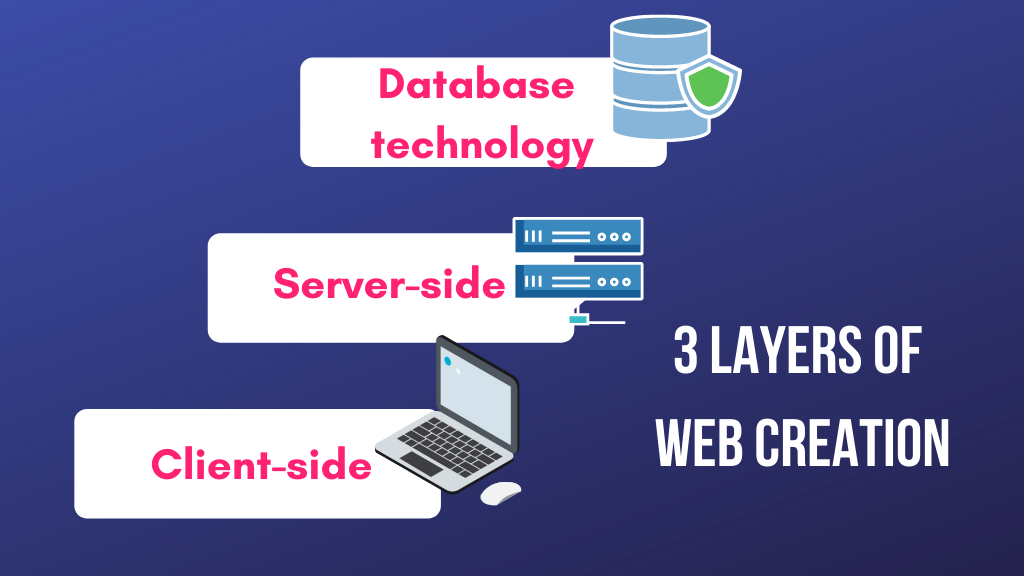

Client-side coding (frontend), server-side coding (backend), and database technology are the three layers of web development.

Let’s take a closer look at each of these layers.

Client-Side

Client-side scripting, or frontend development, refers to everything that the end user interacts with directly. Client-side code runs in a web browser and determines what visitors see when they land on a website. The frontend is in charge of things like layout, fonts, colours, menus, and contact forms.

Server-Side

Backend development, also known as server-side scripting, is all about what happens behind the scenes. The backend is the part of a website that the user does not see. It’s in charge of storing and organising data, and of making sure everything on the client side runs smoothly. It accomplishes this by communicating with the frontend.

Whenever something happens on the client side, such as a user filling out a form, the browser sends a request to the server. The server side “responds” by sending the relevant data back in the form of frontend code, which the browser can interpret and display.
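The request and response cycle described above can be sketched in a few lines of Python. This is only an illustration of the idea, not a real server: the function name `handle_form_submission` and the form field `name` are hypothetical, and an actual site would run code like this behind a web server or framework.

```python
from html import escape
from urllib.parse import parse_qs


def handle_form_submission(request_body: str) -> str:
    """Turn a URL-encoded form body into an HTML response.

    Hypothetical handler: the 'name' field is an assumption made for this sketch.
    """
    # Browsers send simple form fields as URL-encoded text, e.g. "name=Ada+Lovelace".
    fields = parse_qs(request_body)
    # parse_qs maps each field to a list of values; fall back to a default name.
    name = fields.get("name", ["stranger"])[0]
    # Escape user input before placing it in HTML so it is shown as plain text.
    return f"<h1>Thanks, {escape(name)}!</h1>"


if __name__ == "__main__":
    # The "server" receives the form data and answers with frontend code (HTML).
    print(handle_form_submission("name=Ada+Lovelace"))
```

The returned string is the kind of frontend code the browser would then interpret and display to the user.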

Database Technology

Websites also rely on database technology. The database holds all of the files and content a website needs in order to work, storing them in a way that makes them easy to retrieve, organise, update, and save. The database runs on a server, and most websites use a relational database management system (RDBMS).

To summarise, the frontend, backend, and database technologies all work together to create and operate a fully functioning website or application, and these three layers make up the web development foundation.

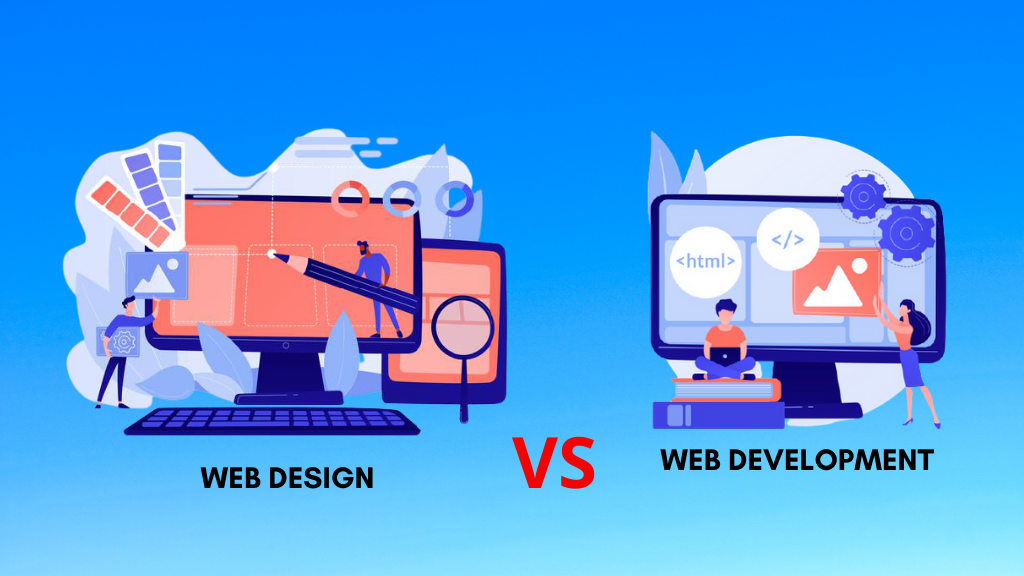

The difference between Web Development and Web Design

Although the terms web development and web design are sometimes used interchangeably, they refer to two very different things.

Consider how a web designer and a web developer could collaborate to create a car:

The developer would be in charge of all the functional components, such as the engine, wheels, and gears. The designer would be in charge of both the visual aspects of the car, such as how it looks, the layout of the dashboard, and the design of the seats, and the user experience the car provides, such as how smoothly it drives.

Web designers create the look and feel of a website. They design the website’s layout, ensuring that it is logical, user-friendly, and enjoyable to use.

They consider all of the visual elements. What colour schemes and fonts will be used? What buttons, drop-down menus, and scrollbars should be present, and where should they be placed? Which interactive touchpoints does the user interact with to get from point A to point B? Web design also takes into account the website’s information architecture, determining what content will be included and where it should be placed.

Web design is a broad field that is often subdivided into more specific roles such as User Experience Design, User Interface Design, and Information Architecture.

It is the responsibility of the web developer to transform this design into a live, fully functioning website. A frontend developer takes the visual design provided by the web designer and builds it using coding languages such as HTML, CSS, and JavaScript. The site’s more advanced features, such as the checkout on an ecommerce site, are built by a backend developer.

In other words, a web designer is an architect and a web developer is a builder or engineer.

A brief history of the World Wide Web

The internet as we know it today has taken decades to develop. To better understand how web development works, let’s go back to the beginning and look at how the internet has evolved over time.

1965: The First WAN (Wide Area Network)

The internet is essentially a network of networks that connects all types of wide area networks (WANs). A wide area network is a telecommunications network that covers a large geographical area. The first WAN was established at the Massachusetts Institute of Technology in 1965. This work paved the way for the network later known as ARPANET, which was originally funded by the US Department of Defense’s Advanced Research Projects Agency.

1969: The First Ever Internet Message

UCLA student Charley Kline sent the first internet message in October 1969. He attempted to transmit the word “login” over the ARPANET network to a computer at the Stanford Research Institute, but the system crashed after the first two letters. The system recovered about an hour later, and the full word was successfully transmitted.

1970s: The Rise of the LAN (Local Area Network)

Several experimental LAN technologies were developed in the early 1970s. A local area network (LAN) is a computer network that connects nearby devices in the same building, such as in schools, universities, and libraries. The development of Ethernet at Xerox PARC from 1973 to 1974 and the development of ARCNET in 1976 are two significant milestones.

1982 – 1989: Transmission Control Protocol (TCP), Internet Protocol (IP), the Domain Name System and Dial-Up Access

Transmission Control Protocol (TCP) and Internet Protocol (IP) were introduced as the ARPANET protocol in 1982, and TCP/IP remains the standard internet protocol today.

The Domain Name System (DNS) was created in 1983 to provide a more user-friendly way of naming and locating hosts on the network (and, later, websites). Cisco shipped its first router in 1987, and World.std.com became the first commercial dial-up internet service in 1989.

1990: Tim Berners-Lee and HTML

HTML (HyperText Markup Language) was created in 1990 by Tim Berners-Lee, a scientist at CERN (the European Organization for Nuclear Research). HTML became, and remains, a fundamental building block of the web.

1991: The World Wide Web Goes Mainstream

The World Wide Web entered the mainstream with the introduction of the graphical web browser. As of 2018, there were more than 4 billion internet users worldwide.

What does a Web Developer do?

Web developers can work in-house or freelance, and the roles and responsibilities they perform will differ depending on whether they are frontend, backend, or full stack developers. Full stack developers work on both the frontend and the backend; we’ll go through what a full stack developer does in more depth later.

Web developers are in charge of creating a product that meets the needs of both the client and the customer or end user. They consult with stakeholders, clients, and designers to understand the vision: how should the final website look and work?

A major part of web development involves finding and fixing bugs in order to continuously refine and improve a website or system. As a result, web developers are skilled problem solvers who are constantly devising new solutions and workarounds to keep things running smoothly.

Of course, all web developers know how to code in at least a few languages. However, different developers work with different languages depending on their job description and field of specialisation. Let’s take a closer look at the various layers of web development and the tasks that go with them.

What does a Frontend Developer do?

The frontend developer’s task is to code the frontend of a website or application; in other words, the part that the user sees and interacts with. They take backend data and turn it into something that is easy to understand, visually appealing, and fully usable for the average user.

They’ll work with the web designer’s designs to bring them to life with HTML, JavaScript, and CSS (more on those later!).

The frontend developer implements the website’s layout, interactive and navigational elements such as buttons and scrollbars, images, text, and internal links (links that navigate from one page to another within the same website). Frontend developers are also in charge of ensuring that the site looks good on all browsers and devices.

They’ll code the website to be responsive or adaptive to different screen sizes, so that the user has the same experience whether they’re on a mobile device, a desktop computer, or a tablet.

Frontend developers also carry out usability tests and fix bugs. At the same time, they’ll consider SEO best practices, maintain software workflow management, and develop tools that improve how users interact with a website in any browser.

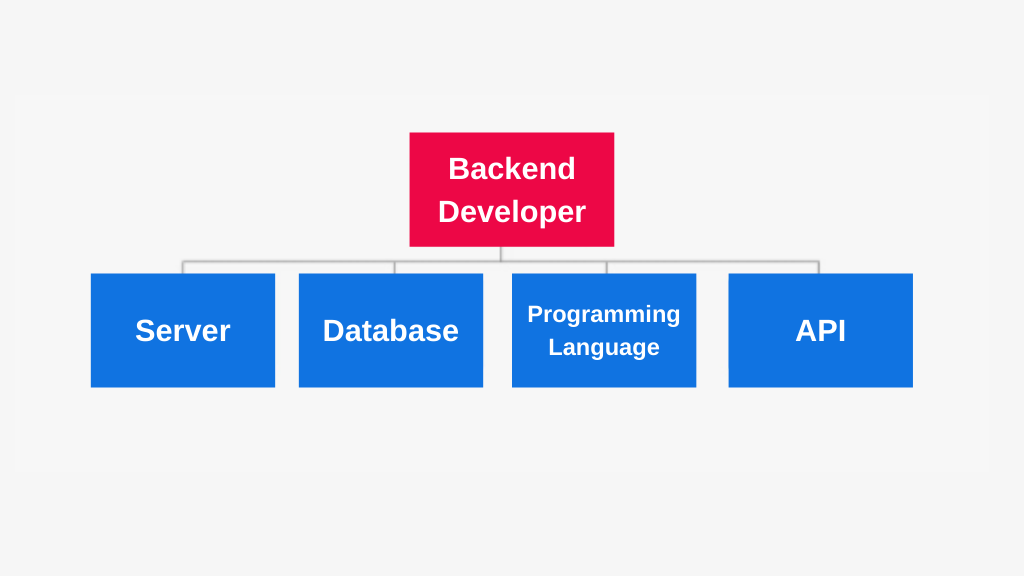

What does a Backend Developer do?

The backend is essentially the brains behind the face (the frontend). A backend developer is in charge of building and maintaining the technology that powers the frontend, which is made up of three components: a server, an application, and a database.

Backend developers write code that ensures everything the frontend developer builds is fully functional, and it is their responsibility to make sure that the server, application, and database all communicate with one another. So, how do they accomplish this? To begin, they build the application using server-side languages such as PHP, Ruby, Python, and Java.

Then they use tools like MySQL, Oracle, and SQL Server to find, save, or change data and return it to the user via frontend code.

Backend developers, like frontend developers, can communicate with clients or business owners to understand their needs and specifications. They’ll then deliver these in a variety of ways, depending on the project’s details.

Typical backend development tasks include database creation, integration, and management, server-side software development using backend frameworks, content management system development and deployment (for example, for a blog), and working with web server technologies, API integration, and operating systems.

Backend developers are also in charge of testing and debugging the backend components of any system or application.

What does a Full-Stack Developer do?

A full stack developer is someone who is familiar with and capable of working with the “full stack” of technology, which includes both the frontend and backend. Full stack developers are experts at every step of the web development process, which means they can help with strategy and best practices as well as get their hands dirty.

Most full stack developers have accumulated years of experience in a variety of positions, giving them a strong foundation in all aspects of web development. Full stack developers know how to code in both frontend and backend languages and frameworks, as well as how to work with server, network, and hosting environments. They also have a strong understanding of business logic and user experience.

Mobile Developers

Web developers can also specialise in developing mobile apps for iOS or Android.

iOS software developers create applications for the iOS operating system, which is used on Apple devices. Swift is the programming language that Apple developed specifically for their applications, and iOS developers are well-versed in it.

Android app developers create applications that work on all Android devices, including Samsung smartphones. Android apps are typically written in Kotlin, which Google has recommended as the preferred language since 2019, or in Java.

Programming Languages, Libraries and Frameworks

Web developers use languages, libraries, and frameworks to create websites and applications. Let’s take a closer look at each of these, as well as a few other tools that web developers use on a daily basis.

What are Languages?

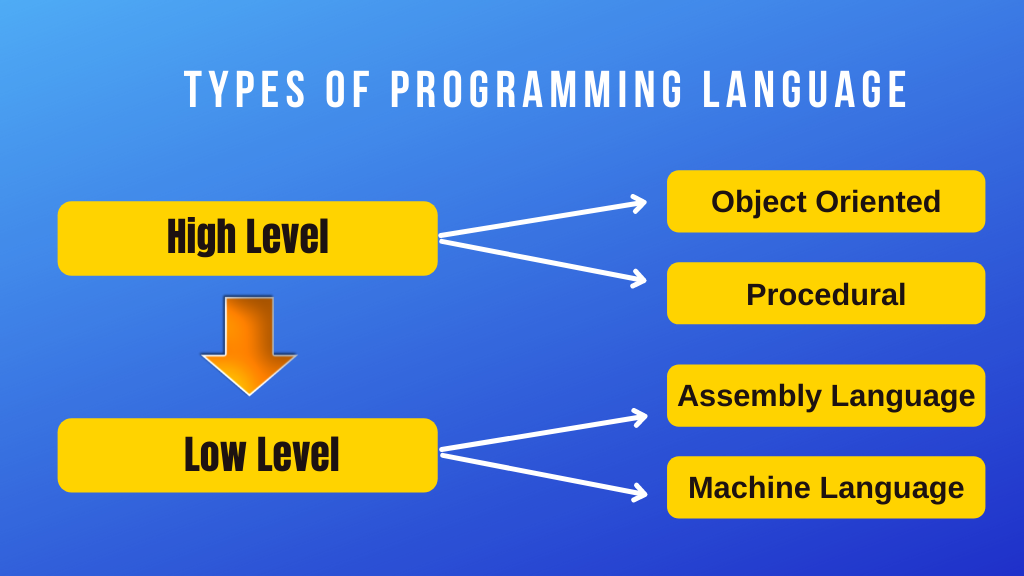

Languages are the building blocks that programmers use to construct websites, applications, and software in the world of web development. Programming languages, markup languages, style sheet languages, and database languages are among the many types of languages available.

Programming Languages

A programming language is a set of instructions and commands that tell the computer how to produce a specific result. To write source code, programmers use so-called “high-level” programming languages. Humans can read and understand high-level languages since they use logical words and symbols. High-level languages fall into two types: compiled and interpreted.

C++ and Java, for example, are compiled high-level languages. Their source code is first saved in a text-based format that human programmers can understand but computers cannot. The source code must be translated into a low-level language, such as machine code, before the computer can execute it. The majority of software programmes are written in compiled languages.

Interpreted languages like Perl and PHP do not need to be compiled. Instead, their source code can be run through an interpreter, which is a programme that reads and executes code. Scripts, such as those used to generate content for dynamic websites, are usually written in interpreted languages.
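To make the idea of an interpreter concrete, here is a small sketch in Python, which is itself usually described as an interpreted language. The `SOURCE` text and the helper name `run_source` are made up for illustration. The point is only that source text can be read and executed at run time, with no separate build step beforehand.

```python
# Source code held as plain text, exactly as it might sit in a script file.
SOURCE = """
total = 0
for n in range(1, 5):
    total += n
"""


def run_source(source: str) -> dict:
    """Read source text and execute it, returning the variables it defined."""
    namespace: dict = {}
    # compile() parses the text into a code object (CPython uses bytecode
    # internally); exec() then runs that code inside the given namespace.
    code = compile(source, "<example>", "exec")
    exec(code, namespace)
    return namespace


if __name__ == "__main__":
    result = run_source(SOURCE)
    print(result["total"])
```

With a compiled language, the translation to machine code would instead happen once, ahead of time, and the resulting executable would be what runs.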

Low-level languages map closely to what the computer hardware actually executes. Machine language can be run directly by the hardware, while assembly language needs only a simple translation step (performed by an assembler) to become machine code.

Java, C, C++, Python, C#, JavaScript, PHP, Ruby, and Perl are among the most popular programming languages in 2018.

Markup Languages

Markup languages are used to specify how a text file should be formatted. In other words, a markup language tells the software that displays the text how the text should be formatted. The markup tags are not visible in the final output, but they are perfectly legible to the human eye since they contain standard words.

HTML and XML are the most widely used markup languages. The HyperText Markup Language (HTML) is a markup language used to create websites. When HTML tags are added to a plain text document, they describe how a web browser should display that document. Let’s look at the example of bold tags to see how HTML works. This is how the HTML version looks:

<b>Make this sentence bold!</b>

When the browser reads this, it knows that the sentence should be displayed in bold. What the user sees is as follows:

**Make this sentence bold!**

XML stands for eXtensible Markup Language. It’s a markup language similar to HTML, but whereas HTML was designed to display data with a focus on how it looks, XML was designed purely to store and transport data. Unlike HTML tags, XML tags aren’t predefined; instead, the document’s author creates them.
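The following sketch shows what author-defined tags look like in practice, using Python’s standard `xml.etree.ElementTree` module to read them back. The document itself is invented for this example: the tag names `playlist`, `track`, `title`, and `minutes` are arbitrary choices, as is the helper name `track_titles`.

```python
import xml.etree.ElementTree as ET

# A made-up document: the tag names are chosen by the author of the data,
# not predefined by XML itself.
PLAYLIST_XML = """
<playlist owner="sam">
    <track><title>Blue in Green</title><minutes>5</minutes></track>
    <track><title>So What</title><minutes>9</minutes></track>
</playlist>
""".strip()


def track_titles(xml_text: str) -> list[str]:
    """Return the text of the <title> element inside every <track>."""
    root = ET.fromstring(xml_text)
    return [track.findtext("title", default="") for track in root.iter("track")]


if __name__ == "__main__":
    print(track_titles(PLAYLIST_XML))
```

Nothing in the document says how the data should look on screen; it only describes what the data is, which is the contrast with HTML drawn above.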

Because it offers a software- and hardware-independent way of storing, transporting, and sharing data, XML makes data sharing and transport, platform changes, and data availability easier.

Style Sheet Languages

A style sheet is a set of stylistic rules. Style sheet languages, as the name implies, are used to style documents written in markup languages.

Consider an HTML document that is styled with CSS (Cascading Style Sheets), a style sheet language.

HTML is in charge of the web page’s content and structure, while CSS determines how that content should be presented visually. CSS can be used to add colours, change fonts, insert backgrounds and borders, and style forms. CSS is also used to optimise web pages for responsive design, which ensures that they adapt to whatever device the user is on.

Database Languages

Languages are used not only to build websites, software, and apps, but also to create and manage databases.

Large amounts of data are stored in databases. The Spotify music app, for example, stores music files as well as information about the user’s listening habits in databases. Similarly, social media applications like Instagram use databases to store user profile information; if a user makes a change to their profile, the app’s database is updated as well.

Since databases aren’t built to understand the same languages that apps are written in, it’s essential to have a language that they do understand, such as SQL, the standard language for accessing and manipulating relational databases. SQL stands for Structured Query Language. It has its own syntax and, in essence, allows programmers to work with the data held in a database system.
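As a rough sketch of the profile-update scenario described above, the following Python example uses the standard `sqlite3` module with an in-memory database. The table name `profiles`, its columns, and the helper functions are all assumptions made for illustration; a real application would have its own schema and would likely use a database server such as MySQL.

```python
import sqlite3
from typing import Optional


def update_bio(conn: sqlite3.Connection, username: str, new_bio: str) -> None:
    """Run an SQL UPDATE when a user edits their profile."""
    # The ? placeholders keep user input separate from the SQL text.
    conn.execute("UPDATE profiles SET bio = ? WHERE username = ?", (new_bio, username))
    conn.commit()


def get_bio(conn: sqlite3.Connection, username: str) -> Optional[str]:
    """Run an SQL SELECT to read the stored bio, or None if the user is unknown."""
    row = conn.execute(
        "SELECT bio FROM profiles WHERE username = ?", (username,)
    ).fetchone()
    return row[0] if row else None


def demo() -> Optional[str]:
    """Create a tiny hypothetical profiles table, change one row, read it back."""
    conn = sqlite3.connect(":memory:")  # temporary database held in memory
    conn.execute("CREATE TABLE profiles (username TEXT PRIMARY KEY, bio TEXT)")
    conn.execute("INSERT INTO profiles VALUES (?, ?)", ("sam", "Hello!"))
    update_bio(conn, "sam", "Learning web development")
    bio = get_bio(conn, "sam")
    conn.close()
    return bio


if __name__ == "__main__":
    print(demo())
```

The application code is Python, but the strings passed to `execute` are SQL: that is the language the database itself understands.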

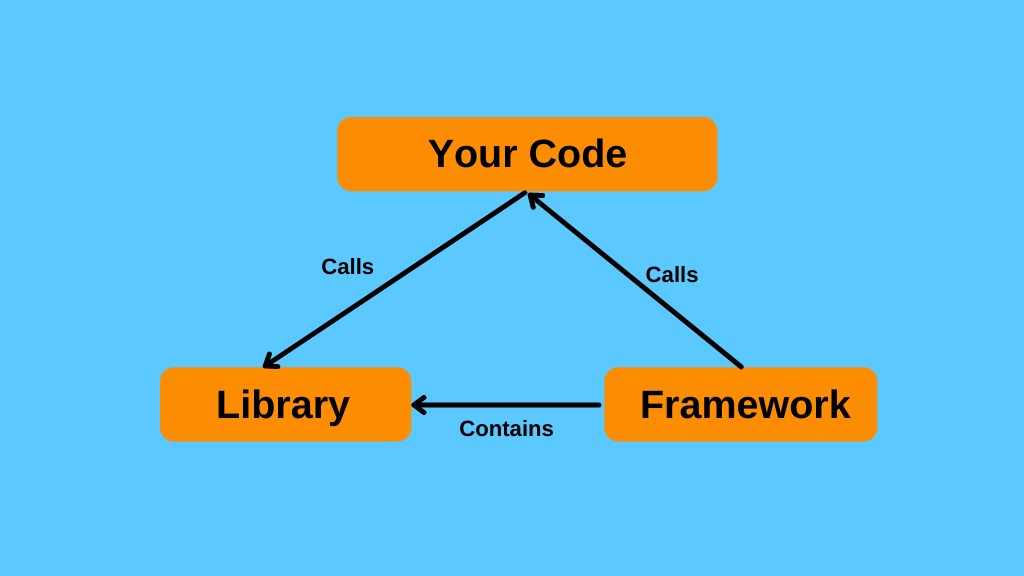

What are Libraries and Frameworks?

Libraries and frameworks are also used by web developers. They are not the same thing, despite the fact that they are both designed to make the developer’s job easier.

Libraries and frameworks are both collections of prewritten code, but libraries are typically smaller and used for a narrower range of applications. A library is a set of useful code that has been grouped together for later reuse. The aim of a library is to allow developers to achieve the same end result while writing less code.

Let’s look at JavaScript as a language and jQuery as a JavaScript library. Rather than writing ten lines of JavaScript code, the developer can use the jQuery library’s simplified, prewritten version, which saves time and effort.
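The same trade-off can be shown in Python rather than JavaScript and jQuery. In this sketch, which swaps in Python’s standard `collections.Counter` as the “library”, the first function counts words by hand and the second gets the same result from prewritten library code. Both function names are invented for the example.

```python
from collections import Counter


def count_words_by_hand(text: str) -> dict[str, int]:
    """Count word occurrences with hand-written code."""
    counts: dict[str, int] = {}
    for word in text.lower().split():
        if word in counts:
            counts[word] += 1
        else:
            counts[word] = 1
    return counts


def count_words_with_library(text: str) -> dict[str, int]:
    """Same result, but the counting logic comes from the library."""
    return dict(Counter(text.lower().split()))


if __name__ == "__main__":
    sentence = "the cat and the hat"
    print(count_words_by_hand(sentence))
    print(count_words_with_library(sentence))
```

The library version is shorter and relies on code that has already been written and tested, which is the main reason developers reach for libraries.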

A framework is a collection of ready-made components and tools that help programmers write code faster, and many frameworks also contain libraries.

A framework gives developers a structure to work from, and the one you choose will largely dictate how you build your website or app, so picking a framework is a major decision. Bootstrap, Rails, and Angular are some of the most popular frameworks.

To better understand libraries and frameworks, imagine you’re building a house.

The framework lays the foundation and provides the structure, as well as guidance and recommendations for performing specific tasks. If you want to install an oven in your new home, you have two options: buy the individual components and build the oven yourself, or buy a ready-made oven from a shop.

Building a website works the same way: you can either write the code from scratch or take pre-written code from a library and simply slot it in.

Other Web Development Tools

Web developers can also use a text editor to write their code, such as Atom, Sublime, or Visual Studio Code; a web browser, such as Chrome or Firefox; and, most importantly, Git!

Git is a version control system that allows programmers to manage and store their code. As a web developer, you’ll almost certainly make changes to your code on a regular basis, so a tool like Git that allows you to monitor these changes and undo them if necessary is incredibly useful.

It’s also a lot easier to collaborate with other teams and handle several tasks at the same time with Git. Git has become such a commonplace tool in the web development environment that it’s now considered poor form not to use it.

GitHub, a cloud-based hosting service for Git repositories, is another extremely popular tool. GitHub offers all of Git’s version control functionality and adds features of its own, such as bug tracking, task management, and project wikis.

GitHub not only hosts repositories; it also offers developers a robust toolkit that makes it easier to follow coding best practices. It is regarded as the go-to place for open-source projects, as well as a showcase for web developers’ skills.

What does it take to Become a Web Developer?

A career in web development is both exciting and financially rewarding, with plenty of job security. According to an earlier Bureau of Labor Statistics projection, employment of web developers was expected to grow by 15% between 2016 and 2026, far faster than the average for all occupations, and web developer is ranked as the 8th best job title in tech in terms of salary and employment rates.

The average annual salary for a web developer in the United States is $76,271 at the time of publishing. Naturally, pay varies based on location, years of experience, and the specific skills you bring to the table.

Learning the necessary languages, libraries, and frameworks is the first step toward a career in web development. You’ll also need to learn how to use some of the tools mentioned above, as well as some basic terminology.

The languages you learn will depend on whether you want to focus on frontend or backend development. All web developers, however, should be familiar with HTML, CSS, and JavaScript, so start there.